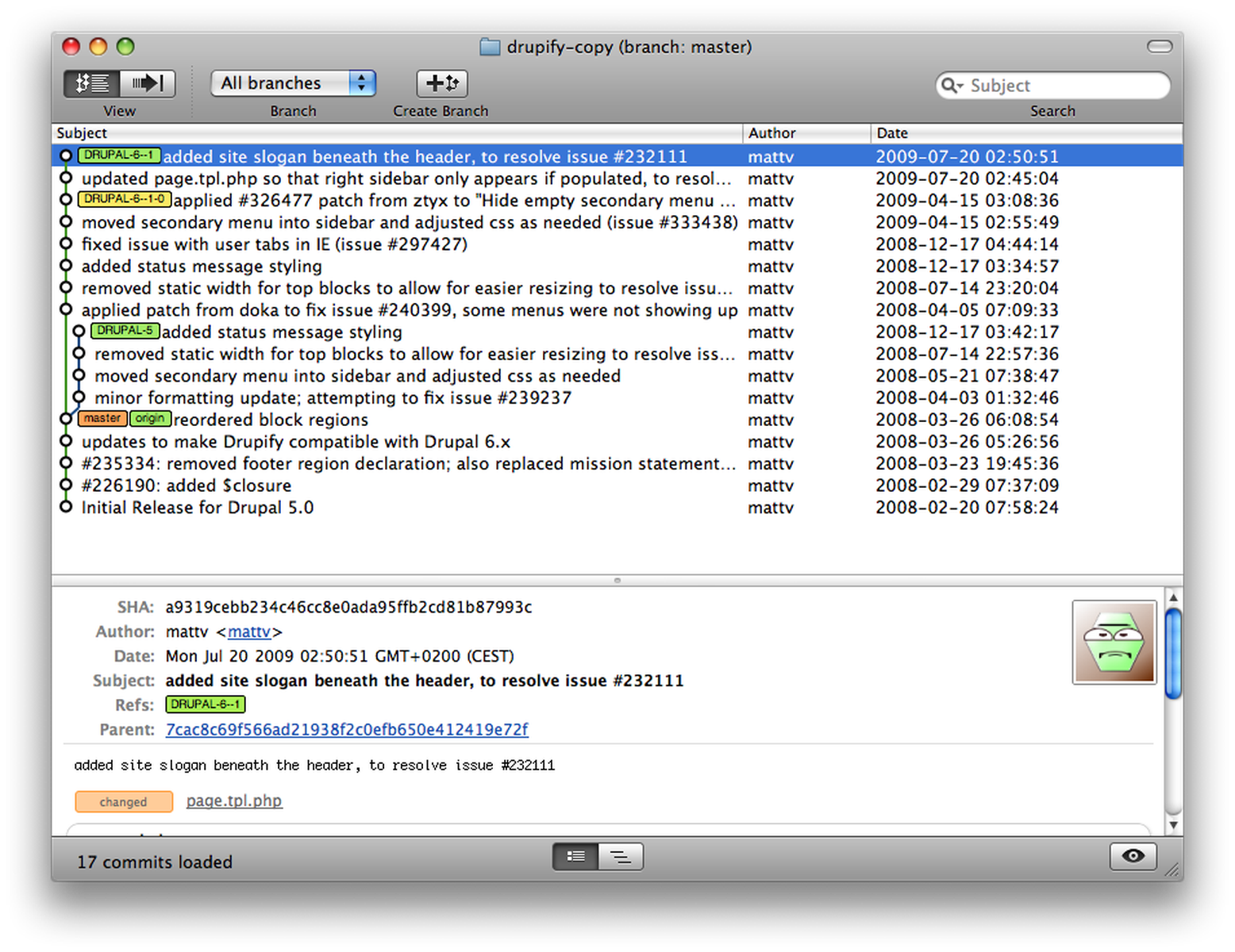

Git identifies files by their SHA, so it should be able to detect that some files in the tree of a commit are already present on the server.

Possible use-case: I have two completely different branches, but some common files are shared between them. When I push one branch, I don't want to upload the common files again when I push the second branch.

Actual use-case: I do a lot of machine learning experiments using Python scripts and some smaller datasets (1MB - 10MB). Every time I start an experiment, I add all necessary experiment files to a new Git tree and use that tree in a new commit without branching. That commit hangs completely free in the air and then gets referenced by a new Git reference (e.g. …). When I now have two experiments with almost the same files (and thus two references), Git pushes all objects again when I push those references. I have low bandwidth and this really slows down my work.

```
$ git remote add origin …
# create dummy 10MB file
# first push, uploads everything - makes sense
# create new empty branch, not based from master
# this uploads now again the dummy file (10MB), although the server …
```

My expectation is that files that were already uploaded won't be uploaded again by git push. But what actually happens is that whenever a new branch is made, all files (even thousands of smaller source files instead of one 10MB file) are uploaded again and again.

My question: How can I make Git detect that the 10MB file is already uploaded? Do you know a workaround or fix that makes Git detect objects that already exist on the server when pushing commits?

Two commits that do not share the same parents (they have completely different histories) have the exact same tree SHA (and thus reference the same object files). Pushing both commits transfers all the objects in that tree twice, although I would expect Git to detect either that the tree in the second commit is already present OR that the file objects within that tree are already on the server.

Answer (I can't answer anymore, since someone marked this as a duplicate):

The solution is unfortunately not that simple. Every time Git wants to sync two repositories, it builds a pack file that contains all the necessary objects (files, commits, trees). When you execute a git push, the remote sends all its existing references (branches) together with the commit SHA each one points to, and the client decides what to pack based on those commits alone. This is the problem: the pack protocol is meant to work per commit, not per object. So, according to the protocol itself, the behaviour explained above is correct.

To work around that, I built a simple script everyone can use to do a git push based on objects instead of commits. It:

1. Takes one commit (only one; you need to execute it on every unsynced parent commit as well) and builds a list of the object SHAs (files, trees, commits) that belong to that commit (except the parent commit).
2. Sends this list to the server; the server answers with the SHAs of the objects it does not have yet.
3. Builds a pack file from the missing object SHAs and sends it to the server, together with the information which ref needs to be updated to which commit.
4. On the server side, the pack file is received and unpacked, and the ref is updated to the given commit SHA.

Of course this has some drawbacks, but I described them in the linked GitHub repository.

With my script, you now get the following:

```
~/git-test (branch11*) $ # added new branch11 as explained at the very …
~/git-test (branch11*) $ python git-sync.py refs/heads/branch11
Total 1 (delta 0), reused 0 (delta …
~/git-test (branch11*) $ git push origin branch11
```

So as you see, it only syncs one object (the commit object), and not the dummy file and its tree object again.

Git works out which objects to pack using only an exchange of references. If the branch you are pushing has no common ancestors with the remote, it's going to pack and re-upload all the objects reachable from that branch.
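The object-based workaround described above can be modelled in a few lines of Python. This is a minimal in-memory sketch under stated assumptions, not the author's git-sync.py. `make_commit`, `Server` and `object_push` are hypothetical names. A plain dict stands in for both the object database and the pack file, and the author/committer line is a made-up fixed value. Object IDs are computed the way Git computes them: SHA-1 over a `<type> <size>\0` header plus the payload. That is why the server can recognise an identical blob or tree even when it arrives under an unrelated commit.

```python
import hashlib


def git_object_id(kind: str, payload: bytes) -> str:
    """Object ID as Git computes it: SHA-1 of b"<kind> <size>\\0" + payload."""
    header = b"%s %d\x00" % (kind.encode(), len(payload))
    return hashlib.sha1(header + payload).hexdigest()


def make_commit(store: dict, files: dict, message: str):
    """Write blobs, one flat tree and a parentless commit into `store`.

    Returns the commit ID plus the IDs of every object belonging to that
    commit (step 1 of the workaround). `store` maps object ID -> payload.
    """
    blob_ids = []
    entries = b""
    for name in sorted(files):  # Git sorts tree entries by name
        blob = git_object_id("blob", files[name])
        store[blob] = files[name]
        blob_ids.append(blob)
        # Tree entry format: "<mode> <name>\0<20-byte binary SHA-1>"
        entries += b"100644 " + name.encode() + b"\x00" + bytes.fromhex(blob)
    tree = git_object_id("tree", entries)
    store[tree] = entries
    # Hypothetical fixed identity and timestamp so the sketch is deterministic.
    body = (
        f"tree {tree}\n"
        "author Example <example@example.com> 0 +0000\n"
        "committer Example <example@example.com> 0 +0000\n"
        f"\n{message}\n"
    ).encode()
    commit = git_object_id("commit", body)
    store[commit] = body
    return commit, [commit, tree, *blob_ids]


class Server:
    """Hypothetical remote holding an object store and a set of refs."""

    def __init__(self):
        self.objects = {}
        self.refs = {}

    def missing(self, ids):
        # Step 2: answer with the IDs this server does not have yet.
        return [i for i in ids if i not in self.objects]

    def receive(self, pack, ref, commit):
        # Step 4: "unpack" the objects and point the ref at the commit.
        self.objects.update(pack)
        self.refs[ref] = commit


def object_push(store, server, ref, commit, ids):
    """Push one commit object by object; returns how many objects were sent."""
    wanted = server.missing(ids)
    pack = {i: store[i] for i in wanted}  # step 3: a dict stands in for a pack file
    server.receive(pack, ref, commit)
    return len(pack)


if __name__ == "__main__":
    local, remote = {}, Server()
    dummy = b"\0" * (10 * 1024 * 1024)  # the 10MB dummy file
    c1, ids1 = make_commit(local, {"dummy.bin": dummy}, "first experiment")
    c2, ids2 = make_commit(local, {"dummy.bin": dummy}, "unrelated history")
    print(object_push(local, remote, "refs/heads/master", c1, ids1))    # 3
    print(object_push(local, remote, "refs/heads/branch11", c2, ids2))  # 1
```

The first push sends three objects (commit, tree and blob). The second sends only the new commit, because the two parentless commits share the same tree and blob IDs. This mirrors the `Total 1` output above. A real tool would also have to walk unsynced parent commits and produce an actual pack file (for example with `git pack-objects`), which this sketch leaves out.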
